This article captures the key points of a conversation I have quite often with teams involved in exploratory work, and their stakeholders.

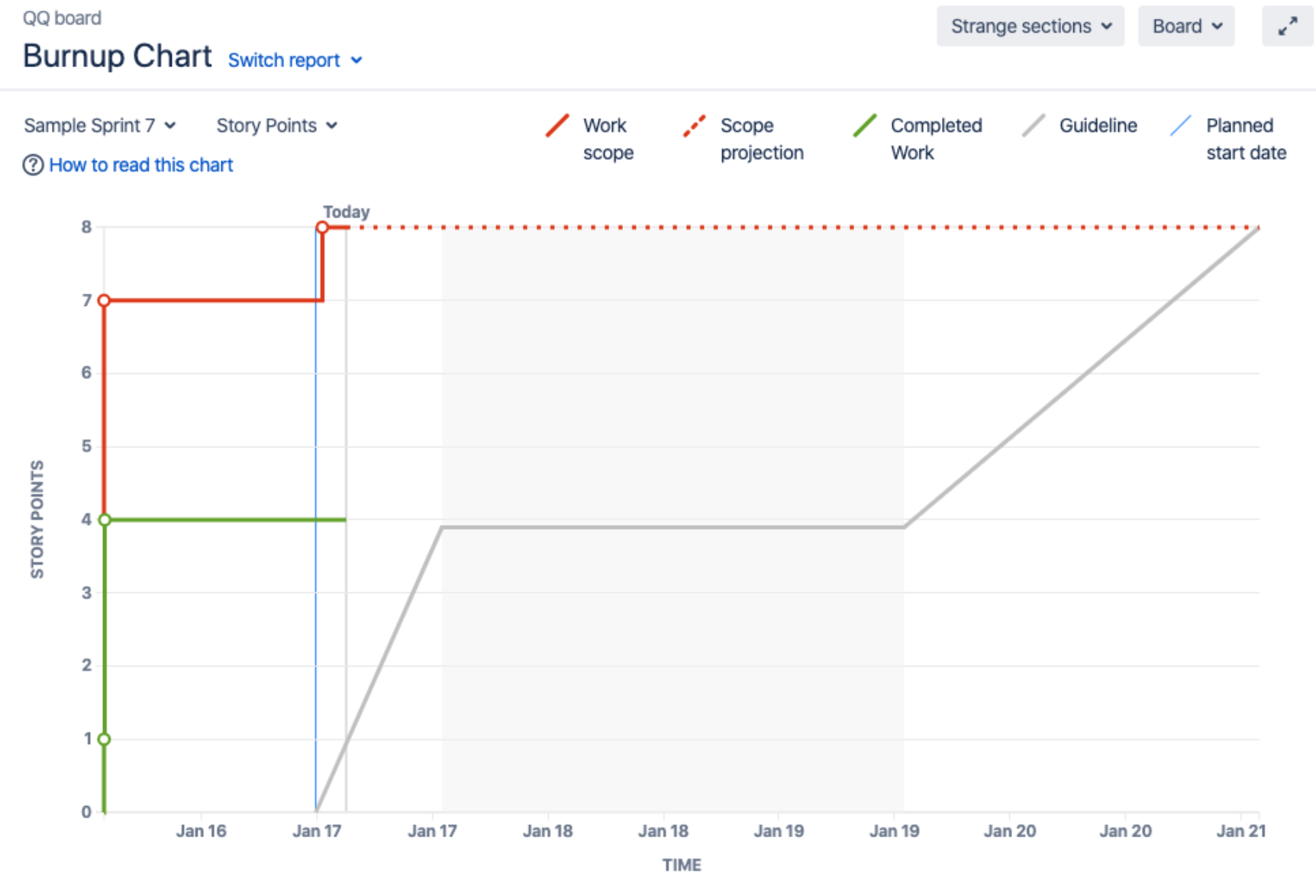

Typical burn up

We’re used to burn up charts looking like the examples in this description from Atlassian – a roughly horizontal scope line which we work towards with a roughly diagonal completed work line.

This is fine if the problem is well understood, the solution is clear and the team can work in an incremental manner towards delivery. (Although with daily reporting, as above, you may not see the forest for the trees.)

Management with burn ups

The classic planning trick with a burn up chart is to extrapolate the (roughly horizontal) scope line and (diagonal) completed work line, to find their future intersection. This is a guide to estimating when a particular initiative or feature might be done. If that time is too far in the future, we can pull management levers such as reducing scope (preferable), or increasing capacity to complete work (if we’re lucky/prepared), or both, to bring the crossover point closer to now.
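The extrapolation described above can be sketched in a few lines of Python. This is a minimal illustration, not a forecasting method from any particular tool: it assumes one observation per reporting period, fits a straight line to each series, and the names `fit_line` and `projected_crossover` are invented for this example.

```python
from typing import Optional, Sequence, Tuple


def fit_line(ys: Sequence[float]) -> Tuple[float, float]:
    """Least-squares slope and intercept for the points (0, ys[0]), (1, ys[1]), ..."""
    n = len(ys)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_x = (n - 1) / 2
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def projected_crossover(
    scope: Sequence[float], completed: Sequence[float]
) -> Optional[float]:
    """Period index (counted from the first observation) where the two trend lines meet.

    Returns None when completed work is not gaining on scope, in which case
    the trend lines never intersect in the future.
    """
    scope_slope, scope_intercept = fit_line(scope)
    done_slope, done_intercept = fit_line(completed)
    closing_rate = done_slope - scope_slope
    if closing_rate <= 0:
        return None
    return (scope_intercept - done_intercept) / closing_rate


# Illustrative numbers only: flat scope, steady delivery.
stable_scope = [100, 100, 100, 100, 100]
completed = [0, 10, 20, 30, 40]
print(projected_crossover(stable_scope, completed))  # 10.0
```

Reducing scope lowers the scope line and adding capacity steepens the completed work line; either change moves the returned period index closer to now.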

However, delivery reality is not always that simple. Departures from the typical burn up form complicate reporting and management tasks.

R&D burn ups differ

The scenario I want to examine here is that in which you don’t know your requirements ahead of time, and hence you can’t draw a predictable scope line. I’m calling this a Research & Development (R&D) project, which comes with an R&D burn up, as shown below.

A quick look at this R&D burn up shows us that the traditional burn up reporting and management techniques may not be very effective.

That’s because the scope line comes from the “Research” bit of R&D (and, for full points, the completed work line comes from the “Development” bit).

Research could take many different forms, from user experience research to data science research to infrastructure research and more. The common thread is that research is about creating knowledge about the problem or solution that can be exploited in development.

Better reporting for R&D

You might think the R&D burn up looks more like a simple Cumulative Flow Diagram, and you would be right. We care about the rate of scope change, the rate of completing work, and the interaction between the two.
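As a rough sketch of those three quantities, assuming the same two cumulative series that sit behind a burn up (the helper names `per_period_change` and `open_work` are made up for this example):

```python
from typing import List, Sequence


def per_period_change(cumulative: Sequence[float]) -> List[float]:
    """Difference between consecutive cumulative totals, i.e. the rate per period."""
    return [later - earlier for earlier, later in zip(cumulative, cumulative[1:])]


def open_work(scope: Sequence[float], completed: Sequence[float]) -> List[float]:
    """Gap between the two lines in each period: known scope not yet completed."""
    return [s - c for s, c in zip(scope, completed)]


# Illustrative numbers only: research keeps adding scope while development delivers.
scope = [20, 30, 40, 50]
completed = [0, 8, 16, 24]

print(per_period_change(scope))      # [10, 10, 10]
print(per_period_change(completed))  # [8, 8, 8]
print(open_work(scope, completed))   # [20, 22, 24, 26]
```

In this made-up series scope grows slightly faster than work is completed, so the gap widens each period even though delivery is steady.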

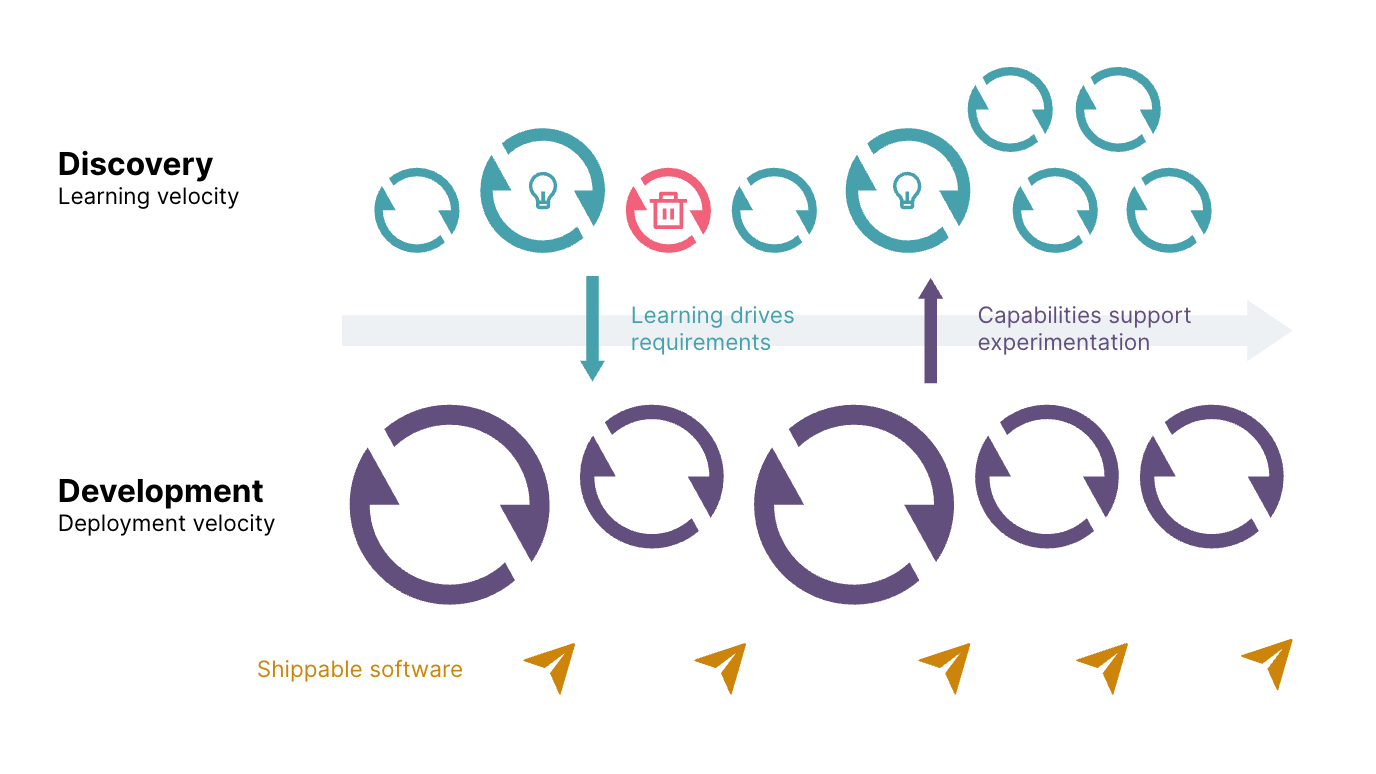

We might also describe an R&D project as “dual track delivery”, with “discovery” substituting for “research”, as illustrated in this Thoughtworks article about an R&D project.

On the development track, we care about deployment velocity (where the term “velocity” in this context means the rate of completing work). You can create a typical burn up for this track, but expect its scope to vary based on the research/discovery track.

On the research/discovery track, however, we care about learning velocity. Learning velocity is not typically measured and reported, but it can be captured on its own burn up. The analogies are:

- Questions, or hypotheses, for scope. These could also be described as known unknowns

- Answers, or results, for completed work

Questions can be captured on cards, prioritised (uncertainty reduction), elaborated (answer criteria), estimated (effort to answer), and so on. See also Hypothesis-Driven Development (HDD). You can walk stakeholders through a wall of questions so they understand and can contribute to the research effort. You can manage this question backlog like a story backlog on the development track, picking up questions to work on, and working on one or a small set at any one time to manage WIP and show learning progress and velocity.
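A learning burn up can be derived from the question cards themselves. The sketch below assumes each card records the week the question was raised and, once answered, the week it was answered; the `Question` class and `learning_burn_up` function are hypothetical names for illustration, and the sample questions are invented.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Question:
    text: str
    raised_week: int
    answered_week: Optional[int] = None  # None while the question is still open


def learning_burn_up(
    questions: List[Question], weeks: int
) -> List[Tuple[int, int, int]]:
    """Return (week, questions raised so far, questions answered so far) per week."""
    rows = []
    for week in range(1, weeks + 1):
        raised = sum(1 for q in questions if q.raised_week <= week)
        answered = sum(
            1
            for q in questions
            if q.answered_week is not None and q.answered_week <= week
        )
        rows.append((week, raised, answered))
    return rows


# Invented example backlog.
backlog = [
    Question("Do users need offline access?", raised_week=1, answered_week=2),
    Question("Can the model run on-device?", raised_week=1),
    Question("Is the vendor API fast enough?", raised_week=2, answered_week=3),
    Question("Will batching cut infrastructure cost?", raised_week=3),
]

for week, raised, answered in learning_burn_up(backlog, weeks=3):
    print(week, raised, answered)
# 1 2 0
# 2 3 1
# 3 4 2
```

The "raised" column plays the role of the scope line and the "answered" column the completed work line, so the slope of the latter is one way to read learning velocity.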

Better management for R&D

The typical management levers remain for accelerating R&D projects, on both tracks: prioritising or reducing the scope, or providing more capacity, but there are some nuances.

If research and development are really happening in parallel, you won’t necessarily be able to predict a delivery date from a development track burn up, because the trend lines won’t have an obvious intersection. In this case you rely primarily on the comparative estimates of experts (who’ve done something comparable before). To know which levers to pull, you instead need to ask how much you’re willing to commit for the chance of finding a really valuable solution, and how confident you are in your search strategy.

You may generate a lot of knowledge about things that don’t work. This doesn’t necessarily mean the team is doing anything wrong. It’s just that when they turn unknowns into knowns, what becomes known is not immediately favourable to further development. You should keep track of this experimentation process in some way though, as described above. This will give you confidence that you’re looking properly.

How do you know you’re looking in the right places? You need to look in as many places as possible, and in a diverse set of places – you need a portfolio of questions, or experiments, or options. A diverse portfolio includes naive and seemingly contradictory experiments too. Critically review your research backlog for diversity.

Our primary lever for managing uncertainty and aiding progress is timeboxing. On the development track we push small changes frequently so we always have a clear state of the solution. On the discovery track we timebox hypothesis testing so we always have a clear state of knowledge.

Cycle time is thus a key metric for doing things right, and it also supports our ability to do the right things, i.e. running more experiments from our diverse portfolio.
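One simple way to track this, sketched under the assumption that each experiment (or small change) has a recorded start date and, once finished, an end date. The function name and sample dates are illustrative only.

```python
from datetime import date
from statistics import median
from typing import List, Optional, Sequence, Tuple

Experiment = Tuple[date, Optional[date]]  # (started, finished or None if still running)


def cycle_times_days(experiments: Sequence[Experiment]) -> List[int]:
    """Elapsed days for each finished experiment; running ones are skipped."""
    return [
        (finished - started).days
        for started, finished in experiments
        if finished is not None
    ]


# Invented sample data.
experiments = [
    (date(2024, 1, 1), date(2024, 1, 4)),
    (date(2024, 1, 2), date(2024, 1, 9)),
    (date(2024, 1, 8), None),  # still running
    (date(2024, 1, 5), date(2024, 1, 10)),
]

times = cycle_times_days(experiments)
print(times)          # [3, 7, 5]
print(median(times))  # 5
```

The median is used here rather than the mean so that one long-running experiment does not dominate the figure; that is a choice made for this sketch, not a rule.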

Three leading R&D measures

To assess the health of R&D projects with leading measures, consider these three primary dimensions:

- Do you have a diversified list of questions (aka options or experiment portfolio) to pursue?

- Can you conduct experiments in rapid cycles?

- Can you rapidly exploit knowledge upside in development?

Leaders and teams can devote their efforts along these three axes, which are explored in more detail in the follow-up post What to expect when you weren’t expecting an R&D project.

If a traditional burn up wouldn’t work for your project, consider these R&D techniques instead.