Category: Visualisation

-

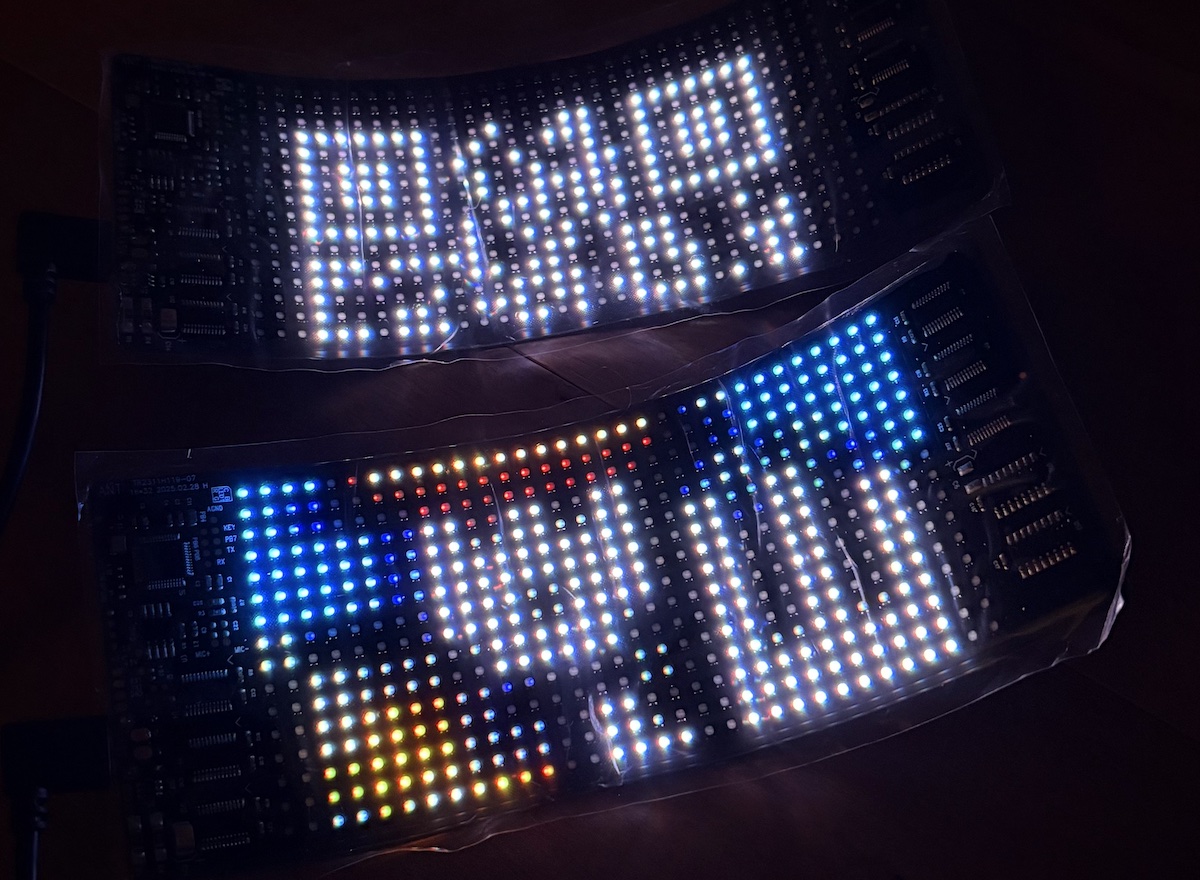

Ignore all previous instructions – Snow Crash cosplay

With an end-of-year party themed Enter the Simulation, I had to cosplay Snow Crash, the book that coined the term “metaverse”. Spoiler alert: Skip ahead to the build notes, or read on for the explanation, which Neal Stephenson fans and tech nerds may have already pieced together… The eponymous Snow Crash virus from the 1992…

-

Orienteering map training turns 20

A little over 20 years ago, I was introducing my wife and some friends to orienteering in Jindabyne. With my family involved in the Scottish 6 Days since its 1977 inception, I had been orienteering since before I could walk, and reading orienteering maps was second nature to me. Not second nature to the rest…

-

Reflecting on UI polish

I’ve been meaning to give the trippler UI a glow up for MY2025 and my PyCon AU selection finally provided the impetus. In previously envisioning migrating to a JavaScript front-end (vibe coded?), I was possibly making the next step bigger than it needed to be, as I’ve managed to squeeze a bit more out of…

-

Charger hopping

While it’s nice to make good time, when in remote areas, sometimes any route will do. For EV road trip planning in trippler, I first found the direct route, then chose chargers along the route. This approach can fail when there aren’t enough chargers close to the route, as in remote areas. Charger hopping is…

-

A resilient charging planner

Check out the prototype of trippler, an interactive charging planner for resilient EV road trips. Based on my own EV road trip experience, trippler is as much about easily understanding charging options and contingencies, to reduce charger anxiety, as it is about coming up with a single best plan. If you’re looking for the latest…

-

Data complications

Solving EV charger anxiety used maths for better road trips, but skipped over using real data. Let’s fix that, or at least try to… The easy bits To get from A to B in an EV, I used Open Route Service to find a base route to a destination and Open Charge Map to…

-

Solving EV charger anxiety

Many EV adventures are accessible using the charging network in Victoria, but faulty chargers still have the potential to induce charger anxiety on road trips. Planning apps–EV drivers’ constant companions–may not fully solve this when the reported status of chargers is unreliable and faults are prevalent. As a driver, I want resilient plans that already…

-

EV snow’d tripping

Adventures with EVs often involve big mountain climbs, which consume additional energy, impacting range. I recently had the opportunity to drive climbs from Bright to Omeo and back via Mt Hotham, in Gunaikurnai and Taungurung country, and get a sense for how EVs handle hills. I collected efficiency data for each leg of a road…

-

EV adventuring with resilience

Road trips are the most demanding EV scenario currently in Australia, especially to remote destinations. However, a little planning shows that they are still quite doable. Did the plans survive contact with reality? Mostly. In short, it was a pleasure to drive an EV long distances and the only inconvenience was faulty public charging infrastructure.…

-

LLM WTF

What Token Follows (WTF) when generating text with a Large Language Model (LLM)? This notebook (you can run in Colab) and companion slide deck is my perfunctory (don’t say tokenistic) attempt to demystify GenAI for a general technology audience, specifically: how text is generated by LLMs. The premise of the notebook is to demonstrate and…